One of my vices is trying to play musical instruments and a lot of musicians suffer from ‘option paralysis’. That is to say, when presented with the infinite flexibility of DSP-based digital options, they freeze and drop back to simple analogue equipment, with fewer options. Facing the need to write today’s Display Daily, with the huge choice of topics from Display Week, I’m suffering from this malaise!

However, after some rumination, I am going to cover the keynote talk given by Meta, that Barry identified last week (SID’s 60th Anniversary – Optimism in the Face of Falling Panel Prices, Demand and Profitability (Part 1)) as including a key chart for the understanding of how far we still have to go in getting to where we need to be in near-to-eye devices. The talk was given by Joe O’Keeffe, VP of Research at Meta’s Reality Labs for the last six years (and a display veteran after five years with InfiniLED, a microLED company that was bought by Meta).

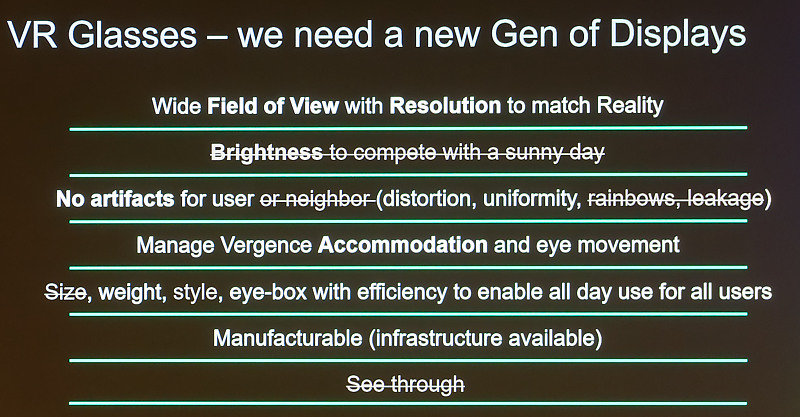

As he said, good AR can ‘bring superpowers’ by enhancing lots of aspects of human capability and can replace the smartphone display, but it has to be a better device for the user. Without good glasses, he believes, there is no Metaverse – the glasses are the door to the Metaverse. The perfect displays for this application don’t currently exist. He listed the attributes that he believes are needed.

VR is a bit closer, partly because its needs are a subset of the AR requirements. O’Keeffe showed this slide, which takes away those points that are important for AR but are not an issue for VR, and he talked through the challenges. Eventually, he said, the two categories may converge into single devices because of the number of similarities, but not today.

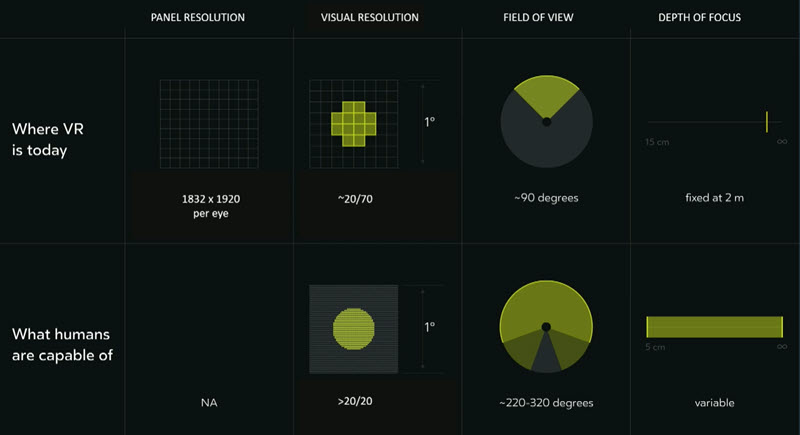

He then compared what humans can do with where displays are at the moment. There’s a big disparity – we’re three times off in resolution, three times off in field of view and we only have a fixed focal length of image available.

Further, you need to track the user’s gaze, as that information is needed both for optimising the operation of the displays and for use in avatars, so that they more accurately represent the wearer. He briefly explained what Meta has done to address the challenge of different focal depths, using motors to move the displays and lenses and using LC-based lenses. A stack of six LC-based lenses can give 64 different focal planes.
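The figure of 64 focal planes from six lenses is consistent with each lens being a two-state (on/off) element, since 2^6 = 64. The sketch below only illustrates that counting argument. The two-state behaviour, the `focal_states` helper and the binary-weighted powers are assumptions for illustration, not details given in the talk.

```python
from itertools import product


def focal_states(lens_powers):
    """Return the sorted distinct total optical powers (in dioptres)
    reachable when each lens in the stack is either off (0) or on."""
    totals = set()
    for switches in product((0, 1), repeat=len(lens_powers)):
        total = sum(p for p, on in zip(lens_powers, switches) if on)
        totals.add(round(total, 6))  # rounding guards against float noise
    return sorted(totals)


# Hypothetical binary-weighted powers: 0.05 D, 0.1 D, ... 1.6 D.
powers = [0.05 * 2**i for i in range(6)]
states = focal_states(powers)
print(len(states))  # number of distinct focal planes for this stack
```

Note that the count only reaches 64 if every on/off combination gives a different total power. If two lenses had the same power, some combinations would coincide and fewer planes would be available.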

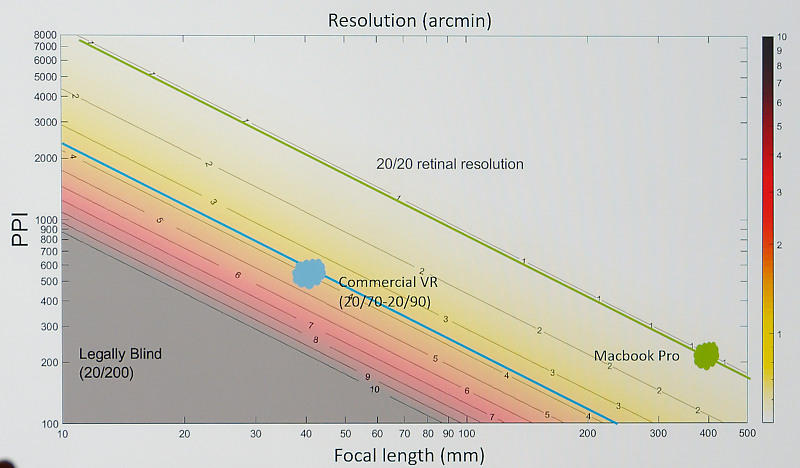

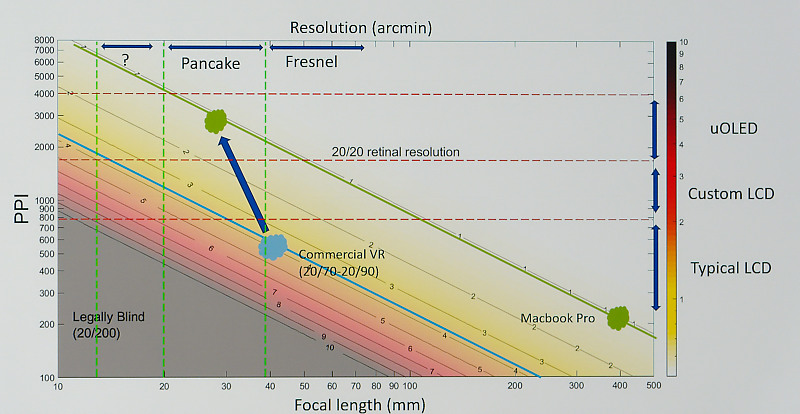

He explained the screen door effect and said that ideally you have big display pixels that reach each other. He then introduced the big chart that Barry referred to. The chart shows the ppi needed for different resolutions at different focal lengths. Choosing a level of resolution and a focal length, you can read off the ppi needed.

The current state of the art is around 20/70 to 20/90 vision at around 500 to 600 ppi, and the aim at the moment is to get to 20/20 vision at a focal distance of around 30mm. To get to that point, you need to move to different display technologies, and he overlaid them on the chart. For the next level of performance, you need different optics as well, and he said that the next Meta target is based on microOLED displays with 3,000 ppi, with pancake optics to reduce the focal length.
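As a rough check on those numbers, the relationship between acuity, focal length and ppi can be sketched with a simple magnifier model. This is not the method behind O’Keeffe’s chart. It assumes that 20/20 vision corresponds to one pixel per arcminute, that the display sits at the focal plane of an ideal lens, and the 40mm focal length used for the current-generation case is a hypothetical value.

```python
import math

MM_PER_INCH = 25.4


def required_ppi(snellen_denominator, focal_length_mm):
    """Estimate the display ppi needed for 20/<snellen_denominator> vision.

    Assumption: 20/20 means one pixel subtends one arcminute, and the
    angle per pixel scales linearly with the Snellen denominator.
    """
    arcmin_per_pixel = snellen_denominator / 20.0
    theta = math.radians(arcmin_per_pixel / 60.0)
    # Pixel pitch on a display at the focal plane of an ideal lens.
    pitch_mm = focal_length_mm * math.tan(theta)
    return MM_PER_INCH / pitch_mm


# 20/20 at a 30 mm focal length: close to the 3,000 ppi target.
print(round(required_ppi(20, 30)))
# 20/80 at a hypothetical 40 mm focal length: in the 500 to 600 ppi range.
print(round(required_ppi(80, 40)))
```

Under these assumptions, halving the focal length doubles the ppi needed for the same acuity, which is why shorter optics such as pancake lenses push the display requirement up so sharply.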

The long-term aim is to get to the top left corner, which would mean really high resolution together with a shorter focal length, allowing lighter and smaller glasses.

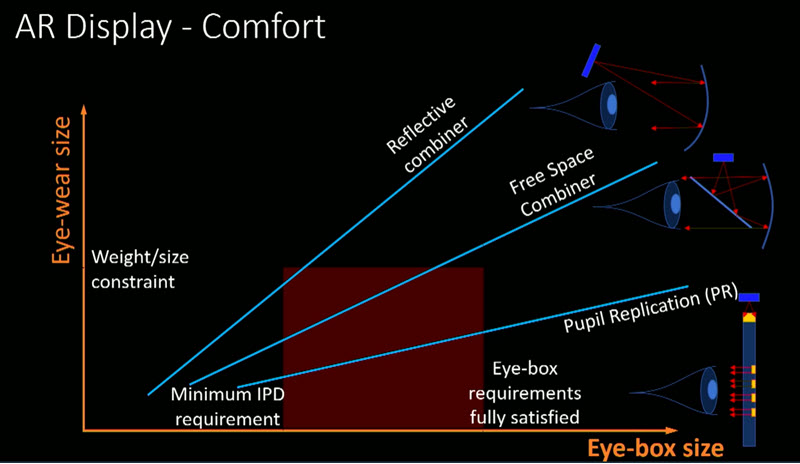

Turning to AR, there are three types of architecture: reflective combiners, free space combiners (birdbath optics) and what he called pixel replication or waveguide-based solutions. In the end, if you want to get a large eye box and small size, the waveguide approach becomes the only option. O’Keeffe then looked at the way that waveguide AR displays work. The approach is great in terms of size and weight, but efficiency is extremely low. There seem to be some technical solutions for different optics and displays, but often the supply chains don’t exist.

O’Keeffe said that a ‘tsunami of innovations’ was needed to enable earlier display breakthroughs such as the introduction of notebook PCs and he invited SID delegates to join with Meta in trying to do the same for AR & VR headsets with optimal display solutions. (BR)