The Society for Information Display’s Detroit Chapter sponsored its 23rd Annual Symposium on Vehicle Displays in Livonia, Michigan, on September 27 and 28. This year’s edition was called “Vehicle Displays and Interfaces 2016.”

Attendance jumped to 400 people, up from 300 last year, and the organizers found a new venue, the Burton Manor Conference Center, to accommodate the attendees, in addition to the 60 exhibitors that included many Tier 1 suppliers. The Conference Sponsors were Denso, Continental, Yazaki, Radiant Vision Systems, Sharp, Visteon, and Tianma NLT USA.

The technical conference, which we cover in this article, extended over almost two days. An accompanying article discusses the exhibition.

Jennifer Wahnschaff delivering the keynote address at the 2016 Vehicle Displays Conference. (Photo: Ken Werner)

Jennifer Wahnschaff (VP of Continental Automotive’s Instrumentation and Driver HMI for the Americas, Interior Division, Auburn Hills, Michigan) kicked off the conference with her keynote address, “The Road Ahead; What Do Future Consumers Want?” She referred to her team’s research to give this four-part answer:

- Diversification

- Autonomous operation

- Electrification

- Connectivity

She commented that electrification might fall off the list, given the slow penetration of electric vehicles. This comment provoked pushback during the Q&A, with the questioner arguing that the technology for making a true general-purpose electric car and a substantial recharging infrastructure are only now coming on line, and that penetration will now increase significantly.

With the 238-mile-per-charge, battery-electric Bolt appearing in Chevrolet showrooms by the end of this year, increasing market penetration is a safe bet. The presumably comparable Tesla Model 3 is not scheduled to go on sale until late 2017 or early 2018, but approximately 400,000 customers have put down $1000 (refundable) each to pre-order their cars. So a second sales surge is guaranteed.

In her talk, Wahnschaff said her team is doing extensive consumer persona research, asking what kind of experiences people want to have in their vehicles. She noted that “Not everyone is always in the same persona.” If you’re in your commuting-to-work persona, your expectations may be very different than if you’re going on a family vacation or if you’re a plumber driving from one repair call to another.

If a Car is Autonomous, What is the Buyer Appeal?

If a car is autonomous, how do manufacturers appeal to buyers? As Wahnschaff put it, “If I don’t have to drive my car, what can my car do for me?” To satisfy customers, “We need a holistic HMI approach.” And what is that? Wahnschaff defined a holistic human-machine interface (HMI) as “a system-overarching approach, consisting of interconnected hardware and software components, which dynamically considers the user’s preferences, needs, condition and environment.” A holistic HMI will lead “to a safer and more intuitive driving experience with an enhanced joy of use.”

Wahnschaff said that auto makers want more and larger displays for autonomous vehicles because infotainment and communication will be even more important than they are today. More displays will appear in interior products such as head-up displays, rear- and side-mirror displays, instrument clusters, and center displays.

“More and more interest is being shown for head-up displays” by auto makers, Wahnschaff continued, and augmented reality will show “drivers” what the car actually sees and knows, thus enhancing driver confidence, as well as indicating to the driver why the car wants to hand control back to him or her. She noted that Uber establishes a great deal of trust with its users by presenting the user with driver identity, time to pick-up, and cost, all before the trip is requested. She ran a clip from a late-night talk show in which a celebrity told how she ordered an Uber car to take her to a hospital to deliver her baby because she had more confidence in Uber picking her up promptly than she had in an ambulance.

There is a great deal to be said about automotive connectivity, but a couple of figures from Wahnschaff are telling: There are 210 million connected cars on the road today, and this number will double by 2021. The Internet of Things (IoT) will contain more than 50 billion objects by 2020, she said.

Wahnschaff concluded by saying that a multi-modal (touch, voice, gesture, etc.) HMI approach is key to a safer, effective, and pleasurable driving experience. It will include a reconfigurable panel for extended personalization, increased safety with situation-specific warnings using functional lighting, seamless connectivity with the cloud and personal devices, and augmented reality content for HUDs. All of this will support a major paradigm shift of the car to an “entertainment and communication spot.”

Displays are Growing in Volume and Size

In Session I, devoted to markets, Brian Rhodes of IHS Automotive reported IHS research indicating that displays are growing in volume and size, that buttons are disappearing, that the HMI modality “preference is consolidating around touch and speech,” and that center-stack displays are consolidating around diagonals of seven to eight inches. Automotive AMOLEDs will be in production in 2018, he said, and there is a strong trend toward capacitive touch replacing buttons.

Rhodes said IHS research indicates modular center-stack systems will dominate between now and 2022. During the Q&A, an audience member said that integrated systems will be important for luxury models, while modular systems will be the norm for lower-cost models. Rhodes agreed.

Jennifer Colgrove of Touch Display Research identified Advanced Driver Assistance Systems (ADAS), the touchless HMI market, smart windows, and OLED lighting as other hot trends for 2016 and 2017. She projected that the market for touch modules (for all applications, not just automotive) would grow from about $28 billion in 2016 to about $36 billion in 2020. Among the many new opportunities for displays in automobiles is a pillar display combined with an external camera to render one of today’s blind spots “transparent.”
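For a sense of scale, the touch-module projection can be converted into an implied annual growth rate. The short Python sketch below is our own arithmetic, not part of the presentation. It assumes smooth compound growth between the two approximate figures, and the function name `cagr` is ours.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Approximate figures quoted in the talk, in billions of US dollars.
market_2016 = 28.0
market_2020 = 36.0

# Assumption: growth compounds evenly over the four years 2016 to 2020.
rate = cagr(market_2016, market_2020, 2020 - 2016)
print(f"Implied compound annual growth: {rate:.1%}")
```

Under these assumptions the implied growth is roughly 6.5% per year, which describes a steadily expanding market rather than an explosive one.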

Colgrove noted that ADAS is a big growth market, and after-market ADAS is a large opportunity. One could imagine a decline in used-car sales because older cars lack advanced electronics. A robust after-market ADAS industry could moderate such a trend.

Touch Perception in the Car

In Session 3, on touch and interactivity, Jonathan Kahl, Senior Human Factors Engineer at 3M Corporate Research Laboratories, reported on extensive research done by him and his team in “Dimensions of Human Touch Perception that Influence Customer Preference.”

Kahl noted that the perceptual dimensions of texture are roughness, softness, stickiness, and warmness, and these dimensions must be understood in the context of perception-action cycles to appreciate how they affect user preferences for touch devices. He commented that scanning velocity, contact force, and friction have only minor effects on perceived roughness, and that softness is “…unaffected when the velocity and force of application are randomized.” If a liquid has the same temperature as your skin, you will not perceive it as wet.

When the results are combined, said Kahl, “perceived stickiness, perceived roughness, and coefficient of friction can be used to reliably predict haptic material preference….” Boiling it down even more, “…track pads and other touch devices should not have slip-stick, should have some roughness, but not a lot.”

Josh Bartlett of Optical Filters USA and Chris Ard of TouchNetix, Ltd. (Fareham, UK) presented the TouchNetix approach to industrial projected-capacitive (PCAP) touch in “Force Sensing Capacitive Touch Screen Technology.” Bartlett observed that PCAP’s primary problem is its lack of an inherent feedback mechanism for assuring users that they have “touched” the panel within the intended area and have successfully actuated the system. The authors concluded that incorporating integrated force sensing along with pre-selection haptics solves these problems and that the TouchNetix PressScreen is an effective implementation for automotive applications.

Steering Wheels Getting Too Complex

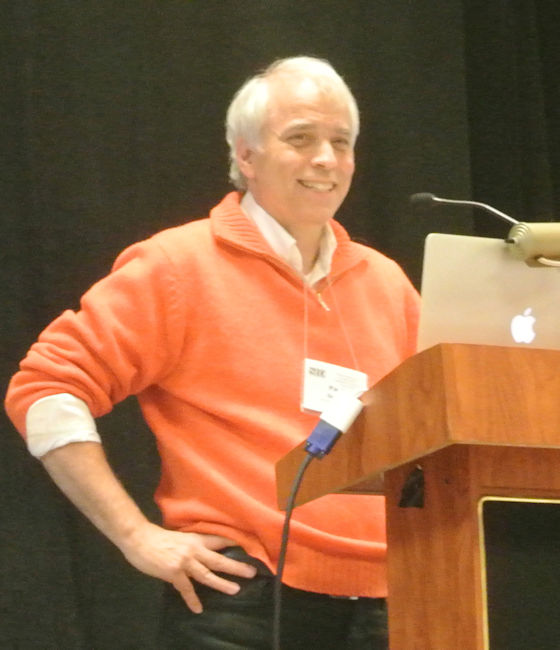

Jochen Huber – Synaptics

Jochen Huber and colleagues from Synaptics, Switzerland, presented “Enhancing Touch Control on the Steering Wheel with Force Input.” Huber showed a photo of a four-spoke steering wheel on a BMW E23 (7 Series, 1977-1986), which contains no buttons at all except for the horn. He then showed a current Jaguar XF steering wheel, which has 17 visible buttons on the front side of the wheel, plus two shift paddles. Not unreasonably, he observed that the “…increase in number of features challenges scalability [with] increased cognitive load, complex spatial mappings, [and] difficult learning curve.”

Huber concluded that a solution is enhancing touch with force input on the steering wheel with Synaptics ForcePads. He observed that force input is already available on many mobile devices, and that force permits an “increased richness of touch interaction.”

The authors investigated users’ ability to discriminate different force thresholds, force threshold difference for multi-level force input, and recommended tolerances for force sensing on touchpads with a 16-participant controlled study. They concluded that there should be a maximum of two force levels for discrete actions, and that activation thresholds should be based on an absolute force difference of at least 525 grams. Accuracy requirements for a force-enabled touchpad can be relaxed to ±15% for steering-wheel interfaces. In the future the authors intend to compare multi-level force input in different areas of the vehicle.
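To make these recommendations concrete, here is a minimal Python sketch of a two-level force classifier that enforces the 525-gram separation and shows what a ±15% sensing tolerance means for a reading. It is an illustration only, not Synaptics' implementation: the names `ForceLevels`, `classify`, and `reading_band`, and the example thresholds of 200 g and 725 g, are hypothetical. Only the two-level limit, the 525 g difference, and the ±15% figure come from the talk.

```python
from dataclasses import dataclass

MIN_SEPARATION_G = 525.0  # minimum absolute difference between levels (from the study)
SENSOR_TOLERANCE = 0.15   # relaxed accuracy requirement of +/-15% (from the study)


@dataclass(frozen=True)
class ForceLevels:
    """Two discrete activation thresholds, in grams (values are hypothetical)."""
    light_g: float
    firm_g: float

    def __post_init__(self):
        # Reject configurations whose levels are too close to tell apart.
        if self.firm_g - self.light_g < MIN_SEPARATION_G:
            raise ValueError("thresholds must differ by at least 525 g")


def classify(force_g: float, levels: ForceLevels) -> int:
    """Return 0 (no press), 1 (light press), or 2 (firm press)."""
    if force_g >= levels.firm_g:
        return 2
    if force_g >= levels.light_g:
        return 1
    return 0


def reading_band(true_force_g: float, tolerance: float = SENSOR_TOLERANCE):
    """Lowest and highest reading a sensor with this relative tolerance may report."""
    return true_force_g * (1 - tolerance), true_force_g * (1 + tolerance)


# Hypothetical thresholds chosen only to satisfy the 525 g rule.
levels = ForceLevels(light_g=200.0, firm_g=725.0)
for force in (150.0, 300.0, 800.0):
    print(force, "->", classify(force, levels))

low, high = reading_band(500.0)
print(f"A true 500 g press may read between {low:.0f} g and {high:.0f} g")
```

Capping the design at two levels keeps the classifier to a pair of comparisons, and the wide gap between thresholds leaves room for the sensor's reading error around each level.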

HMIs in Automated Driving

Bryan Reimer of the MIT AgeLab and the New England University Transportation Center gave an invited address entitled “Evolving HMI Assessment Toward a Multi-modal Vision in an Increasingly Automated Driving Experience.” Over the past four years, Reimer said, his team conducted nine extensive field studies of seven embedded vehicle systems and a portable smartphone. The studies encompassed 500 subjects and 10,000 task interactions, considered a wide range of physiological and performance metrics, and coded glance data for over 12,000 miles of driving. In addition, the team performed simulation studies of a number of portable and wearable technologies.

Among their findings:

- Voice interfaces are often multi-modal (you may look at a screen to confirm your phone voice dialing), but not all implementations are the same. An important safety metric turns out to be off-road glance time. Data from voice-dialing systems in current automobile models showed significant differences.

- Reimer proposed a significant revision to the traditional model of demand dimensions to make it more suitable for today’s multi-modal systems. The new model recognizes that the traditional goal of distraction mitigation must now be replaced by attention management. We no longer have only one task from which we must not be distracted; we now have many attentional demands that must be managed in a responsible way.

Attention Management is Needed

The AHEAD Consortium, which consists of MIT, Denso, Honda, Jaguar Land Rover, Panasonic Automotive, and Touchstone Evaluations, is looking at the spatial and temporal characteristics of tasks, and a framework in which demand can be optimized across the visual, auditory, haptic, vocal, manual, and other directions by considering the relative costs and benefits of different input, output, and promising modalities. AHEAD is also looking at the interactions between secondary tasks and the broader operating environment. The attention management approach seeks to consider the demands on the driver, active safety systems, and other higher-order forms of automation as a whole. The work so far has produced a number of conclusions:

- Visual demand in multi-modal interfaces is NOT the same as visual demand in traditional visual-manual interfaces.

- Even with HUDs, the role of task-switching is significant.

- “Display concepts to support driver awareness is going to become very important.”

- Drivers who use safety technologies forget they don’t have them when they switch cars. “As we drive less we will become less capable drivers.” Self-piloting cars separate “miles driven from miles ridden.”

- “Product innovation must be treated as a process rather than a destination” because users won’t be able to make the behavioral changes in one leap, even if systems are ready.

Panel Discussion Looks at the HMI

Next, a panel discussion on HMI Evolution was moderated by Tom Seder (General Motors Global R&D). Here is a selection of direct and indirect quotes from the panel members and the audience.

The panelists, from left to right: Thomas Seder (GM), Linda Angell (Touchstone Evaluations), Paul Green (U. of Michigan), Brian Rhodes (IHS Automotive), Omer Tsimhoni (GM), and Silviu Pala (Denso). (Photo: Ken Werner)

Linda Angell (President & Principal Scientist, Touchstone Evaluations):

- “For safety you want to encourage the driver to be looking forward as much as possible.”

- Displays can enhance human-machine cooperation in all of these areas: clearly defined roles of user and machine/automation; shared goals and understanding based on “mental models” in the domain of operation; communication about actions, states, transitions, and more; trust; reliance.

- Roles and goals of user and automation change at different levels of automation. So do the user’s information needs.

- The relationship between user and automation should be engineered as explicitly as any part of the vehicle system.

Brian Rhodes (IHS Automotive) said:

- “Autonomous vehicles and HMI are converging.”

- “As soon as we have an HMI that forces the driver to take over in less than 1 second, then L3/L4 will work.” (But there are serious reservations about whether humans can be relied upon to do that with adequate situational awareness. –KW)

- “Ride-hailing will account for 25% of all miles driven globally in 2030, up from only 4% today.”

Omer Tsimhoni (General Motors Global R&D) is now looking at 2022, 2023, and 2024 models at GM. A new option rarely erases all of its predecessors, he said. An L4 Uber is not a car; it’s a taxi. Such a vehicle may be driven 50% of the time instead of the 5% typical of a privately-owned vehicle today. The Uber taxi would be more expensive than a private vehicle, but the higher duty cycle justifies it.

CASE is a mnemonic for connectivity, autonomous, shared, and electric, which are the customer expectations for next-generation vehicles. Among the new display applications possible in such vehicles are transparent displays on windows and external displays for communicating with pedestrians or other vehicles.

In Tsimhoni’s view, as others at the conference also said, we must go from HMI to HM Cooperation.

Paul Green delivering his presentation at the panel session. (Photo: Ken Werner)

Paul Green (University of Michigan) said he would try to draw the big picture, and focused on the various questions that must be answered at every stage of a trip in an automated vehicle, from pre-trip to post-trip. Among his many questions:

- “Who will be the drivers (and passengers) of the future as a function of the level of automation provided?”

- “Will people with significant visual handicaps be allowed to drive, and under what conditions?”

- “Will people with other limitations (e.g., cognitive limitations more common in elderly drivers) be allowed to drive?”

- “Will the skill of operating a manually-driven vehicle be lost?”

- “If people are not driving, what will they do?”

- “You will not get useful answers about what people want or need if it is beyond their experience (such as the use of an automated vehicle).”

Following the presentation, the knowledgeable industry audience fueled a lively Q&A (and comment) session.

Q (Tom Seder): Do we risk replacing human error with design error?

A (Silviu Pala): Design error or perhaps manufacturing error? Quality will be even more important.

A (Paul Green): It’s very hard to get enough data on low-probability events. NASA had to deal with that. We’re not prepared for the multi-point catastrophe.

Q: What about Uber as a bomb delivery vehicle?

A (Omer Tsimhoni): This group probably has the least possible technical expertise to answer your question.

Q: Does higher duty cycle imply many fewer cars?

A (Omer Tsimhoni): All cars will not be replaced. Perhaps 10% of vehicles will be replaced by Uber-type vehicles. It will be a mixed situation.

A (Pala): Who is responsible for driving the car? If it’s the driver, all of the problems are HMI design problems. If it’s the car, it is an entirely different problem.

Q: Autonomous trucks?

A (Green): The Peloton Company is looking at running trucks in pairs or platoons for fuel savings. Truck drivers are getting older, and fewer people are entering the business. Expensive sensors are easier to do in trucks than in passenger vehicles.

A (Bob Donofrio): Many trucks are “tethered” to home base via GPS, so base always knows where the trucks are.

Technical Conference – Day 2

The technical conference resumed on Wednesday. Here’s a sampling.

Q&A. Bob Donofrio asks a question of David Rousseau. (Photo: Ken Werner)

In “Turning Dust into Light” by Philip Pust and colleagues, presenter David Rousseau described OSRAM’s next generation of LEDs for backlighting. These LEDs replace the broadband yellow converter phosphor of conventional “white” LEDs with a red and green phosphor, which can increase the coverage of the NTSC gamut from 71% to 87%. The authors compared the effects of two different red and green phosphor combinations. Rousseau commented that HUD gamuts are typically smaller than direct-view gamuts.

Q: Why is a wider gamut a concern, since auto makers are not yet pushing HCG and HDR?

A (Rousseau): As consumers become increasingly accustomed to HCG and HDR on their tablets and phones, they will expect the same in cars.

Atul Kumar and his colleague spoke of the benefits of Corning Gorilla glass for auto display cover lenses, and reported on mechanical tests vs. other materials. Among their conclusions is that cold bending “of Gorilla glass offers a low cost alternative to form 3D cover lens; preserves pristine fusion formed surface, and allows a functional coating to be applied to the surface while flat.”

3M Addresses Sparkle Problems

In “Anti-glare and Sparkle Solutions for Automotive Displays” by Brett Sitter and his colleagues from 3M, the authors observed that the non-uniform light leaving a display’s pixel array can interact with the anti-glare surface to produce sparkle. 3M’s solution is to sandwich a very fine diffraction grating between two layers of optically clear adhesive and exterior “liners.” The optic evens out the sparkles without a substantial negative effect on resolution. “The new anti-sparkle technology can be implemented with any anti-glare surface.”

Summary

This was a lively and content-rich conference that covered displays for automotive applications and their essential interaction with HMIs for the current and coming generations of increasingly intelligent and interconnected vehicles.