It has been a quieter week in the mobile area, while the large display side has plenty of news because of the NAB show. I have attended NAB in the past and much of the coverage is similar to IBC. However, the influence of Hollywood and the cinema industry is much stronger at NAB, and Chris has a great report from a pre-NAB event run by SMPTE. (The highlights are on the website for MDM subscribers: Highlights from the Technology Summit on Cinema.)

Last week, I wrote about my desire for a camera that captures depth data, after a demonstration of the Intel RealSense technology. I was very smug, therefore, when I read this week that Apple is to buy a company called LinX Computational Imaging of Israel. The firm has technology that can combine images from multiple cameras (which can be of different types) to get over some of the current limitations of standard smartphone cameras. The use of multiple cameras also allows depth maps to be captured.

(Apple, of course, previously bought PrimeSense, which has depth tracking, but the two use different approaches.)
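For readers who want to see why a second camera gives you depth, here is a minimal sketch of the textbook stereo relationship: an object appears shifted between the two views (the disparity), and depth is the focal length times the camera spacing, divided by that shift. This is not LinX's method, which has not been published in this detail; the function names and the focal length, baseline and disparity figures are all hypothetical values chosen for illustration.

```python
# Illustrative only: textbook two-camera (stereo) depth, not LinX's algorithm.
# All names and numbers below are hypothetical.

def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Return depth in metres for one pixel.

    disparity_px    -- horizontal shift of the same point between the two views
    focal_length_px -- lens focal length expressed in pixels
    baseline_m      -- distance between the two camera centres in metres
    """
    if disparity_px <= 0:
        # No measurable shift: the point is effectively at infinity.
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


def depth_map(disparity_rows, focal_length_px, baseline_m):
    """Convert a 2D grid of disparities into a 2D grid of depths."""
    return [
        [depth_from_disparity(d, focal_length_px, baseline_m) for d in row]
        for row in disparity_rows
    ]


if __name__ == "__main__":
    # Assumed figures: 1000 px focal length, cameras 1 cm apart.
    disparities = [[20, 10], [5, 0]]
    for row in depth_map(disparities, focal_length_px=1000, baseline_m=0.01):
        print(row)
```

Note how quickly the disparity shrinks with distance when the cameras are only a centimetre or so apart, as they would be in a phone. That is one reason depth from a small array is likely to be most useful at fairly close range.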

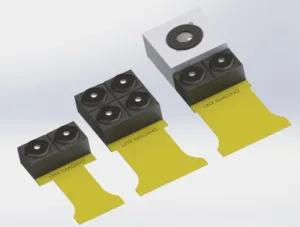

The LinX technology also allows some clever things to be done with multiple cameras. For example, by using multiple monochrome cameras rather than cameras with Bayer filters you can, at least in theory, capture a lot more of the light for better performance in low light. You could also design an array with a combination of colour and monochrome cameras, or combine images from fixed-focus and autofocus cameras.
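To put a rough number on the monochrome advantage, the sketch below assumes that each colour filter in a Bayer array passes only about a third of the incoming light, which is a common rule of thumb rather than a figure from LinX. The function names and the four-camera arrays are hypothetical, and real sensors will differ.

```python
# Back-of-envelope light budget for a camera array.
# Assumption (not from LinX): a colour filter passes roughly 1/3 of the light,
# while a monochrome sensor with no filter passes all of it.
import math


def relative_light(n_mono, n_colour, filter_transmission=1 / 3):
    """Total light gathered, in units of one unfiltered camera."""
    return n_mono * 1.0 + n_colour * filter_transmission


def gain_in_stops(light_a, light_b):
    """How many photographic stops brighter light_a is than light_b."""
    return math.log2(light_a / light_b)


if __name__ == "__main__":
    all_mono = relative_light(n_mono=4, n_colour=0)
    all_bayer = relative_light(n_mono=0, n_colour=4)
    mixed = relative_light(n_mono=3, n_colour=1)

    print("all mono vs all Bayer:", round(gain_in_stops(all_mono, all_bayer), 2), "stops")
    print("mixed vs all Bayer:   ", round(gain_in_stops(mixed, all_bayer), 2), "stops")
```

Under that assumption, the mixed array keeps most of the low-light gain while still having one camera to supply colour, which is presumably the attraction of combining the two types.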

LinX itself claims to deliver “SLR-type” imagery. We suspect that by this the company means images with shallow depth of field. We would have liked more information but, at the time that I was writing this, it looked as though the company’s website had been under attack. Links that worked when I first saw the story had stopped working, presumably after an overload from those interested in Apple!

The technology reminds me of what I saw at IBC from the Fraunhofer IIS in Erlangen, which is developing multi-camera arrays, although its application was more in professional content creation (there’s a video at Fraunhofer Explains its Virtual Camera Technology at IBC 2014). These techniques tend to have very high computational requirements, and I suspect that this applies to LinX as well. Given that Apple paid only around $20 million for LinX (according to the WSJ), the computational load may also be a barrier for the engineers in Israel. Certainly, if LinX could do what it claims using today’s smartphone processors, I’d have expected Apple to have had to pay more.

Of course, this is a camera technology, not a display technology, but it seems to me that a big development in image capture with depth would make a big difference to the availability of good 3D content. That has the potential to get the move to 3D displays going again, although there needs to be some time for wounds to heal. One of the big barriers to 3D was that content creators could not use the same workflow for 2D and 3D, whereas for higher resolution they could keep the same cameras and workflows. Adding depth at low cost would really help.

Bob