Thanks to Jon Peddie for inspiring today’s Display Daily and apologies in advance – it may be a bit of a rant! He made me realise that it’s quite a long time since I wrote about a pet topic of mine as, from here, it looks as though progress has stalled and that’s a shame.

I’m talking about gaze recognition (or ‘eye tracking’). As long ago as 2006, in a CeBIT report, I first wrote about the need or desire to have a good gaze recognition system at low cost on a PC. There was lots of activity about 10 years ago but things don’t seem to have moved on in recent years.

I have said many times over the last decade that the way we use a PC should be improved. I saw my first ‘WIMP’ (Windows, Icons, Menus, Pointer) graphical user interface (GUI) around forty years ago. I was at a trade show and saw a Perq workstation, based on a US design and sold in the UK by ICL. I was impressed. Then along came the Apple Lisa and, in 1984, the Macintosh. Those computers set the standard for operating a computer with a graphical display, a mouse and a keyboard. Today, my PC is still operated by those devices. Each is dramatically better than what was around 40 years ago, but most of the time, I don’t use anything else (although for some graphics applications, I occasionally use a graphics tablet). I use voice technology to talk to Alexa sometimes, but I don’t use voice regularly on the PC except for Zoom meetings, and nor do most users.

A Perq workstation at the Chilton Computing Centre – and with a graphics tablet! Image: (c) UKRI Science and Technology Facilities Council, available here

So for forty years, the interface and the way of operating or driving my PC have not changed. Jon Peddie the other day said in an email to me that he wants the cursor to appear where he looks (Jon has three very big, very high resolution displays on his desk). That’s a function that I saw demonstrated seven or eight years ago by Tobii, the leader in gaze recognition technology. I had a brief demonstration at CES of an integration of its gaze technology into Windows. In the demonstration, the cursor could be moved by simply looking at a point on the display. The centre of a zoom on Google Maps could be set by just looking at a point on the map. When I got to the bottom of a page I was reading, the display scrolled up automatically. I was hooked. At the time, I remember thinking ‘If you know where I’m looking, you know what I’m interested in’. That really helps software to be more intuitive to use.

I’m in the school of thought that believes that one of Apple’s key strategies, and one that paid off in the huge success of the iPad and iPhone, was to add sensors in the form of cameras, face recognition, compasses, GPS etc. However, although it is massively more sophisticated, my PC has no more external sensors than my first PC with a mouse. It would be great, I thought, if gaze could be another standard input method, not to replace what we already have, but to supplement it. It has been described as the ‘fifth modality’ of input on a PC. Steve Sechrist (see Sad News – Steve Sechrist) wrote an article on that four years ago (Eye Tracking the ‘5th Modality’ in Human Interface). However, there are challenges.

First, deciding exactly where I’m looking turns out to be quite difficult. We humans are very good at working out where others are looking fairly precisely. We know when someone at a party is looking over our shoulder. It is also said that ‘the eyes are more eloquent than the lips’ (perhaps George Bush should have said ‘watch my eyes’, rather than ‘read my lips’?). However, it is hard for a machine to track the eyes accurately unless it has very, very high resolution images.

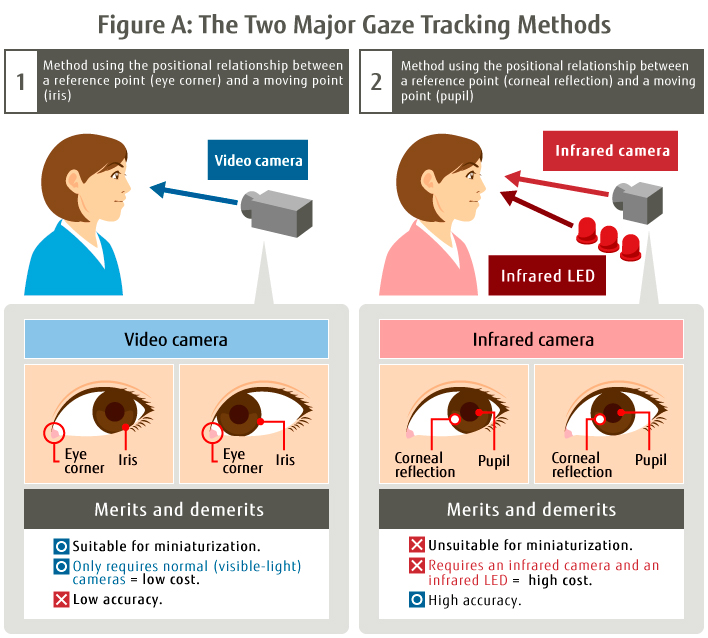

There are two basic ways of eye tracking (according to Fujitsu). One way is to simply look at the position of the iris relative to a fixed point on the face, such as the corner of the eye. That can use a straightforward camera, although it needs to be very high resolution to get a usable image and, even so, it is not very accurate. A better way is to reflect a light source off the cornea (the transparent front surface of the eye) and to measure the position of the pupil relative to that reflection. Gaze recognition systems use infrared for this. Infrared gives better differentiation between the pupil and the corneal reflection. It also has the advantage of not being visible to the user of the system.

Image: Fujitsu – see this page for more background on this diagram.
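To make the corneal reflection idea a little more concrete, here is a minimal sketch in Python of how the offset between the pupil centre and the infrared glint might be turned into a screen position. It is an illustration only and not Fujitsu’s or Tobii’s method. It assumes that the pupil and glint centres have already been found in the camera image, that the user has looked at a handful of known calibration points, and that a simple affine (straight-line) mapping is good enough. All of the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Vec2 = Tuple[float, float]


def pupil_glint_vector(pupil: Vec2, glint: Vec2) -> Vec2:
    """Offset of the pupil centre from the corneal reflection, in camera pixels."""
    return (pupil[0] - glint[0], pupil[1] - glint[1])


def solve3(a: Sequence[Sequence[float]], b: Sequence[float]) -> List[float]:
    """Solve a 3x3 linear system by Gauss-Jordan elimination with pivoting."""
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("calibration points are degenerate")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, 4):
                    m[r][c] -= factor * m[col][c]
    return [m[i][3] / m[i][i] for i in range(3)]


def fit_calibration(vectors: Sequence[Vec2],
                    targets: Sequence[Vec2]) -> List[List[float]]:
    """Least-squares fit of screen = a*vx + b*vy + c, once per screen axis.

    vectors: pupil-glint offsets recorded while the user looked at each target.
    targets: the known screen positions (in pixels) of those targets.
    """
    feats = [(vx, vy, 1.0) for vx, vy in vectors]
    # Normal equations (A^T A) w = A^T t, assumed adequate for this small sketch.
    ata = [[sum(f[i] * f[j] for f in feats) for j in range(3)] for i in range(3)]
    coeffs = []
    for axis in range(2):
        atb = [sum(f[i] * t[axis] for f, t in zip(feats, targets))
               for i in range(3)]
        coeffs.append(solve3(ata, atb))
    return coeffs


def predict_screen_point(coeffs: Sequence[Sequence[float]], vector: Vec2) -> Vec2:
    """Map a new pupil-glint offset to an estimated screen position in pixels."""
    vx, vy = vector
    return (coeffs[0][0] * vx + coeffs[0][1] * vy + coeffs[0][2],
            coeffs[1][0] * vx + coeffs[1][1] * vy + coeffs[1][2])
```

In use, the offsets from the calibration step would be passed to `fit_calibration` along with the known target positions, and `predict_screen_point` would then be called on every camera frame. A straight-line fit like this only holds while the head stays roughly still, so it should not be read as a complete solution.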

If you have a head-mounted display, you can fix the gaze system relative to the user’s head and eye, but it’s a bit trickier than that if the user is sitting at a monitor. Then you have to be able to do a lot of geometry calculations very quickly and very accurately. If the head and eyes were in a fixed position relative to the monitor, it would be easier, but they are not. I can fixate on a point on the display and move my head a lot without interfering with my vision.
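The core of that geometry can be sketched as finding where a line from the eye meets the plane of the screen. The Python below is a simplified illustration, not how any particular tracker works. It assumes that the tracker already knows the eye’s 3D position and gaze direction, and it assumes a coordinate system measured in millimetres with the origin at the top left corner of the screen, x to the right, y downwards and z pointing out of the screen towards the user. The function names are hypothetical.

```python
import math
from typing import Optional, Tuple

Vec2 = Tuple[float, float]
Vec3 = Tuple[float, float, float]


def direction_towards(eye: Vec3, target_on_screen: Vec2) -> Vec3:
    """Unit gaze direction from the eye to a point on the screen plane (z = 0)."""
    dx = target_on_screen[0] - eye[0]
    dy = target_on_screen[1] - eye[1]
    dz = -eye[2]
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    return (dx / norm, dy / norm, dz / norm)


def gaze_point_on_screen(eye: Vec3, direction: Vec3) -> Optional[Vec2]:
    """Intersect the gaze ray with the screen plane z = 0.

    Returns the hit point in millimetres, or None if the user is looking
    parallel to the screen or away from it.
    """
    ex, ey, ez = eye
    dx, dy, dz = direction
    if abs(dz) < 1e-9:
        return None  # gaze is parallel to the screen
    t = -ez / dz
    if t <= 0:
        return None  # screen plane is behind the direction of gaze
    return (ex + t * dx, ey + t * dy)


def mm_to_pixels(point_mm: Vec2, width_mm: float, height_mm: float,
                 width_px: int, height_px: int) -> Vec2:
    """Convert a position on the panel from millimetres to pixels."""
    return (point_mm[0] / width_mm * width_px,
            point_mm[1] / height_mm * height_px)
```

The point of the sketch is that the same spot on the screen corresponds to a different gaze direction for every head position, so both the eye position and the direction have to be re-measured on every frame. Small errors in either are magnified by the viewing distance, which is part of why larger monitors are harder to support.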

Chickens & Eggs

Gaze takes a lot of processing. That means either using a lot of the main processor’s cycles, which in turn means a lot of power consumption and reduced battery life, or needing dedicated processing chips. When you need dedicated chips, you are in a ‘chicken and egg’ situation. Without widespread adoption and high volumes, the chips are expensive. If they are expensive, there is not widespread adoption. You need someone to commit to buying a lot to drive cost down. (I remember hearing that GPS became available in smartphones because of a mandate by the Japanese government that phones had to have it to help in rescue after earthquakes. As a result, every handset maker had to include the technology if it wanted to sell in that country – which they all did. So it became a standard feature, hit huge volumes and became cheap.)

Not long after I was blown away by the Tobii system, the firm made a low cost development system to encourage the use of the technology and I eagerly signed up. However, the bar that I fitted didn’t work very well and I found that the problem was that it really couldn’t cope with the size of monitor that I had. It was limited to, if I remember correctly, around a 21″ monitor and there was no way I was going back to that.

After this experience, I did push lots of my consulting clients in the monitor business to look seriously at gaze technology. Then, in 2017, Oculus bought Eye Tribe, a Danish developer of gaze technology, and Apple bought SMI, another of the top companies in the field. (Tobii is the leader and has investment from Intel and others.) I was very excited that gaze might be hitting the mainstream. To be fair, Tobii has been promoting the technology for gaming and has worked with Alienware, Acer and MSI to integrate eye tracking into notebooks.

On the desktop, though, there is an add-on tracker (version 5) but it costs $229 (back to the chicken/egg problem) and it only works on displays up to 27″ (16:9) or 30″ (21:9). That wouldn’t suit Jon.

Tomorrow, I’ll carry on with this topic (note that we’ll count the two articles as just one for free article tracking purposes. Remember, there is a new low cost option to get unlimited access to Display Daily articles). (BR)