During Facebook’s F8 developer conference, Mark Zuckerberg announced the closed beta release of an AR platform that seems to be modeled on Snapchat’s augmented reality feature, ‘Lenses’. Facebook admits that it wrongly believed AR needed a dedicated headset to make an impact with consumers. Zuckerberg also conceded that it may take 5 to 10 years to arrive at a form factor for AR glasses that consumers will accept.

The firm is now focusing on the smartphone as the first device to put AR into the hands of consumers. More precisely, it sees the camera as the key to creating AR content: add-ons are applied to captured images (including video), which can then be shared easily. In Facebook’s view, the camera will be the first augmented reality platform.

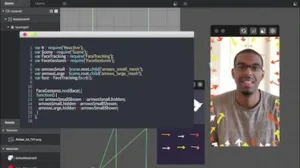

Facebook AR Studio

The new camera effects platform consists of two tools: ‘Frame Studio’ and ‘AR Studio’. Frame Studio is more of a Photoshop-style application for adding content to images, whereas AR Studio is, for now, available to developers only as a closed beta. It will allow developers to create apps for consumers that enhance the image and video capabilities of the smartphone. As the second image shows, the platform is quite powerful, supporting face tracking as well as sensor data and scripting APIs. Facebook believes these are the tools developers need to turn the camera into the AR platform they expect to build on in the near future.

On a more technical level, the platform includes some sophisticated capabilities, such as Simultaneous Localization and Mapping (SLAM)*, 3D effects, and object recognition. Rolling these technologies out to the public will take time: Facebook expects it could take up to ten years for all of these ideas to become widely available. Notably, these tools build on decades of research in computer vision and AI.

Facebook AR Studio in action

In addition, AR Studio can be used to create effects for Facebook Live. Facebook has released two Live effects built with AR Studio, which can be tried in This or That and Giphy Live. – NH

Analyst Comment

*SLAM will be a critical part of AR going forward, as it maps the real world onto the view seen by the user without requiring external tracking hardware. It is an impressive feature of, for example, the Microsoft HoloLens, but it takes a lot of computing power, which will be a challenge for lightweight AR viewers that look like ‘regular’ glasses. (BR)