In Displays for the Brain – Part 1, the basic relationship between the inputs and the brain was described in non-biological and non-physiologic terms. The basic idea behind the concept is “it is not what the message is; it is what we think the message is”.

With this in mind, I demonstrated the idea with some audio and video examples. The terms “convergence” and “crossing the eyes” were used in general to describe the unnatural movements of the eyes. In reality, some of the situations require the eyes to “diverge” and “un-cross”. Ah! I just realized why the term “vergence” is used. In Part 2, I would like to continue exploring how we make the brain work hard in other types of displays.

For All 2D, 3D, Light Field, VR, AR, and Immersive Displays, the Brain does Most of the Work

First consider stereoscopic 3D displays, which use two 2D images. For an optimum viewing experience, the viewer has to position their eyes at the same position as the stereo camera. If the viewer looks at the images from a different position, e.g. from about 30 degrees toward the right side of the images, the two images still carry the same perspectives, but the perceived 3D image is distorted. Each of the 2D images changes from rectangular to trapezoidal in shape, and there are perceived dimensional differences between the left and right images in addition to the difference in perspectives.
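
The trapezoidal distortion described above can be illustrated with a little geometry. The following is a minimal sketch, not a model of any particular display: it assumes a single flat image, a single eye at mid-height of the image, and a viewer who moves around the image center at a fixed distance. The function name `edge_angular_heights` and all the numbers are illustrative assumptions.

```python
import math

def edge_angular_heights(width, height, distance, angle_deg):
    """Angular heights (in degrees) of the left and right edges of a flat image.

    The eye sits `distance` away from the image center, at mid-height,
    and `angle_deg` to the right of the image normal (0 = head-on).
    """
    theta = math.radians(angle_deg)
    eye_x = distance * math.sin(theta)   # sideways offset of the eye
    eye_z = distance * math.cos(theta)   # distance of the eye from the image plane

    def angular_height(edge_x):
        # Distance from the eye to the middle of the vertical edge at edge_x
        d = math.hypot(eye_x - edge_x, eye_z)
        return math.degrees(2.0 * math.atan((height / 2.0) / d))

    return angular_height(-width / 2.0), angular_height(width / 2.0)

# Hypothetical 2 m x 1 m image viewed from 3 m, head-on and from 30 degrees
for angle in (0.0, 30.0):
    left, right = edge_angular_heights(2.0, 1.0, 3.0, angle)
    print(f"{angle:4.0f} deg off-axis: left edge {left:.2f} deg, right edge {right:.2f} deg")
```

Head-on, both edges subtend the same angle and the image looks rectangular. From the right, the nearer right edge subtends a larger angle than the left edge, which is the trapezoid the brain has to undo.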

With some effort, using brainpower, the viewer will still be able to form a perceived 3D image, but that gets progressively more difficult as the viewing angle gets larger. Multi-view stereoscopic systems using lenticular lenses relieve the situation somewhat by providing several more views with different perspectives.

Besides the location of the viewer, the interpupillary distance also plays an important role. I recall watching a 3D movie in the IMAX cinema at the Sony Center, Potsdamer Platz in Berlin, Germany some years ago.

The IMAX in the Sony Center in Berlin

One scene that stuck in my head showed a group of people and a couple of camels walking in the desert. The resolution of the screen was very high, but I perceived the whole scene as being right in front of me, within arm’s length. I did not have the feeling of riding in an airplane and watching the camels and people from a distance.

If I had viewed the scene through Augmented Reality goggles, I would have said that the desert was on my desk and the camels and people were walking on top of the desk. I was very disappointed to see such visual effects presented in this supposedly high-tech location with an apparently sophisticated setup. I thought about this afterwards and concluded that the two cameras must have been quite large and that the separation between the left and right camera lenses must have been much larger than a normal interpupillary distance.

I, as a viewer given such a large effective interpupillary distance, became a giant looking at the little camels and people walking in the desert. I thought about this further and decided that if I could become a giant simply by spacing out the cameras, I could also become the kid shrunk by the mad scientist, seeing a world of giants, by narrowing the distance between the cameras, using small cameras. Indeed, I did an experiment by placing a few small objects on my desk. Using a camera with a macro lens, I took two pictures with a small separation between the shots, imitating the left and right eyes of the shrunk kid.

I put the images next to each other and viewed them with my eyes “crossed” (I meant diverged) and indeed, I “felt” very small and “saw” giant 3D objects. This simple experiment shows that there is another dimension one can explore in creating 3D experiences from two or more images in one-view and multi-view 3D presentations. This is another way to fool our brain into making us feel shrunk, seeing the big world, or like Hercules, lifting up the earth.
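
The giant and shrunk-kid effects can be put into rough numbers. The sketch below assumes a simplified small-angle model in which the brain interprets the recorded disparities using the viewer's own interpupillary distance, so that the scene appears scaled by roughly the viewer's interpupillary distance divided by the camera separation. The function names, the 63 mm default (a commonly quoted adult average) and the camera separations are illustrative assumptions, not measurements from the cinema or from my desk experiment.

```python
def perceived_scale(camera_baseline_mm, viewer_ipd_mm=63.0):
    """Approximate factor by which a stereo scene appears scaled.

    Values below 1 make the viewer feel like a giant (miniature scene);
    values above 1 make the viewer feel shrunk (giant scene).
    """
    if camera_baseline_mm <= 0 or viewer_ipd_mm <= 0:
        raise ValueError("distances must be positive")
    return viewer_ipd_mm / camera_baseline_mm

def perceived_distance(true_distance_m, camera_baseline_mm, viewer_ipd_mm=63.0):
    """Approximate apparent distance of an object under the same model."""
    return true_distance_m * perceived_scale(camera_baseline_mm, viewer_ipd_mm)

# Hypothetical wide camera rig: lenses 630 mm apart, camels 50 m away
print(perceived_scale(630.0))            # scene looks about one tenth of its size
print(perceived_distance(50.0, 630.0))   # camels seem only a few metres away

# Hypothetical macro setup: two shots taken 6.3 mm apart
print(perceived_scale(6.3))              # small desk objects look about ten times larger
```

The model ignores screen size, seating distance and lens focal length, all of which also affect the perceived depth, so the numbers are only meant to show the direction and rough size of the effect.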

During the transition from standard definition to high definition, many TV programmes were produced using multiple cameras with both standard and high definitions. I watched a golf tournament on an HDTV some years ago and, based on the views from the various cameras, figured that about five cameras were being used simultaneously. The final video presentation was produced from footage taken and spliced from these five cameras.

The resolution kept changing back and forth between standard and high definition, but I did not notice this until I grew bored with the game, put on my display technologist's hat, and started to evaluate the quality of the video. While I was immersed in the game itself, I was not aware of the changes in definition of the displayed images. In the same way, to a certain extent, the perception of speckle in laser projection systems is also diminished when viewers are immersed in the content of a presentation such as a movie or video game.

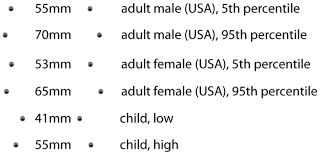

Another aspect of the brain's involvement is the aspect ratio. We know the TV characters so well that when 4:3 images were stretched to fill the 16:9 format, they all got “fat” and we did not notice. On the opposite end, when 16:9 images were squeezed onto standard 4:3 televisions, they all got “thin” and we didn’t notice that, either. I am sure you all know who “they” are in these 3 pictures, ranging from thin, through normal, to fat.
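
How “fat” or “thin” do the characters get? The following is a small sketch of the arithmetic, assuming the image is simply stretched or squeezed horizontally to fill the screen, with no cropping or letterboxing. The function name `horizontal_stretch` is my own choice for illustration.

```python
from fractions import Fraction

def horizontal_stretch(source_aspect, display_aspect):
    """Factor by which widths change when a picture is made to fill the screen.

    Heights are assumed to stay the same; only the width is stretched or squeezed.
    """
    return display_aspect / source_aspect

sd = Fraction(4, 3)    # standard-definition aspect ratio
hd = Fraction(16, 9)   # high-definition aspect ratio

print(horizontal_stretch(sd, hd))  # prints 4/3: everyone is one third wider
print(horizontal_stretch(hd, sd))  # prints 3/4: everyone is one quarter narrower
```

A change of a third or a quarter in width is far from subtle, which makes it all the more remarkable that the brain quietly corrects for it.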

A friend of mine worked on a “Virtual Reality” project capturing 360º footage at scenic locations using helicopters with a 360º camera mounted below the helicopter. Such footage would be viewed by virtual tourists in the comfort of a shopping mall or at home, wearing VR goggles. In my opinion, this type of VR does not provide 3D images, as the same flat 2D images are displayed in both the left and right eye channels. The viewer is, however, able to turn their head around and see the view in any desired direction.

On the other hand, if the viewer moves their head from one position to another, the perspective remains the same, as if the viewer were still stationary. This means that if VR with movement is needed, something different needs to be done. Light Field systems are often claimed, or misunderstood by users, to let the viewer move their head and see different perspectives. This is correct only as long as the viewer’s translational head movement is smaller than the optical aperture of the system, as perspectives outside the optical aperture cannot be obtained.

Many gamers would not be aware of such limitations, as the scenes they view are generated from graphical objects, and the flat images are calculated in real time for the viewer’s position, which can be almost any position. Of course, 3D images can also be artificially generated from 2D images by “shifting” perspectives using interpolation, extrapolation, prediction, etc.

Let’s look at the new type of “cartoon”, e.g. Toy Story, produced by Pixar. Since all the characters are actually solids in the digital world, the 2D movie was calculated for one eye placed at the locations chosen by the director. The 3D version can, in theory, be produced quite easily. The second perspective can be calculated for a second eye placed at the interocular distance from the first eye. The final stereoscopic image can then be presented and viewed using standard means.

As a matter of fact, the same movie can be seen by a giant or by the ‘shrunk kid’ by changing the interocular distance before the generation of the second perspective.
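
The placement of the second eye can be sketched in a few lines. This is only an illustration under my own assumptions, not Pixar's pipeline: it assumes a right-handed coordinate system, a virtual camera described by a position, a viewing direction and an up direction, and a second eye shifted sideways with a parallel view. All names and numbers are hypothetical.

```python
import math

def cross(a, b):
    """Cross product of two 3D vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("forward and up must not be parallel")
    return tuple(c / length for c in v)

def second_eye_position(first_eye, forward, up, interocular):
    """Position of the second eye, offset to the right of the first one.

    `interocular` is in the same units as the scene coordinates.
    """
    right = normalize(cross(forward, up))   # sideways direction of the camera
    return tuple(p + interocular * r for p, r in zip(first_eye, right))

director_eye = (0.0, 1.6, 0.0)   # hypothetical camera position in metres
forward = (0.0, 0.0, -1.0)       # looking down the negative z axis
up = (0.0, 1.0, 0.0)

print(second_eye_position(director_eye, forward, up, 0.063))    # ordinary viewer
print(second_eye_position(director_eye, forward, up, 0.63))     # giant
print(second_eye_position(director_eye, forward, up, 0.0063))   # shrunk kid
```

Rendering the scene once from each of the two positions gives the stereo pair, and changing the single `interocular` number is all it takes to turn the audience into giants or shrunk kids.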

I have been to Times Square in New York many times. Every time, I was amazed by the sizes and variety of the digital LED displays. Display real estate was, and still is, so expensive that there is no unoccupied area. In particular, there is an LED display covering the outside wall of what seems to be an apartment or office building with several windows. As a result, the windows become holes in the display and are dark, without content.

Interestingly enough, when video images were displayed, the windows became “invisible” and I had the illusion that there were no windows until the video got to scenes with little or no movement, when the windows appeared again as holes. This flipping of perceptions in the brain reminded me of the “silent radios” of the old days, which can still be found in some areas.

“silent radios” displayed news using matrix signs

When I read the content of the displayed messages, I perceived the characters as moving across the display in a “continuous” manner. On the other hand, when I stared at a particular area, especially near the edges, I did not see the content of the displayed characters. Instead, I saw random flashing of the LEDs.

From these experiences, it seems that our brains are well adapted to see two realities, the real and the virtual. In the silent radio situation, the real reality is that the LEDs are flashing. The virtual reality is that the displayed characters are moving continuously from right to left.

With the recent advancement of the Virtual Reality and Augmented Reality worlds, images are presented to viewers in such a way that the brain is directed from the real realities to the virtual realities.

In closing, I have some food for thought. Philosophically, VR goggles allow us to perceive a virtual reality. The question is, are we the VR goggles of some higher beings?

The author can be reached at [email protected] if you have any inputs or comments.