The final speaker in the session was Prashanth Halady of Robert Bosch, where he is the Director of the Center of Competence, HMI (Human Machine Interfaces).

Bosch’s business covers automation, consumer and automotive products, but his topic was the evolution of the automotive cockpit.

He started by looking at how the cockpit has evolved, a change driven by software and electronics. It has moved from mechanical systems to simple electronics and then to digital, and the future will include flexible displays on many surfaces in cars and trucks.

To illustrate the potential of augmented reality, he showed a video from Ikea. It demonstrates how the furniture company’s app, used with a recognition system that can detect an Ikea catalogue, superimposes images from the catalogue on the images captured by a phone or tablet.

Although automotive applications have their own development path, they are influenced by the consumer electronics (CE) world. For displays, that means a boost in resolution, colour and so on.

However, there are many challenges in the automotive world such as automatic “fail safe” apps as well as environmental issues – and it typically takes five years to really integrate a new consumer electronics application into a car.

Halady really likes augmented reality head-up displays (HUDs), which can add extra information to the driver’s view, especially if they can avoid taking the driver’s eyes and attention away from the road. Augmented HUDs in cars are an important next step.

OLED displays are interesting for automotive applications because of their performance and flexibility. He thinks that 3D and holographic displays are probably still “some way away”.

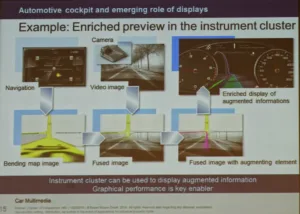

The number of displays in cars is increasing, with HUDs, instrument clusters, centre consoles and passenger displays. Side view mirrors can be replaced with cameras and displays. Cameras can eliminate the blind spot problem of traditional mirrors and improve fuel efficiency by getting rid of the mirror protrusions from the sides of cars.

There has also been a big improvement in graphics processors and network speeds recently. These make new visual experiences possible, but whereas a portable device wants to attract and involve the viewer, in a car the aim may be to distract the driver as little as possible.

In the auto industry, there has been a shift from considering individual displays to thinking about an optimised distribution of different information to different people in the car (not just the driver).

At the moment, most content is static content that is designed for the car, but in the future, the systems will be more personalised and will take into account the preferences and driving style of the driver.

From a plain information and entertainment perspective, there needs to be contextual awareness, and users need to be able to trust that the car’s systems will give them the information they need.

When it comes to cars that are, to a greater or lesser extent, “self-driving”, the passenger may feel uncomfortable, like a back seat driver, if they don’t have complete confidence in the system. For example, there probably needs to be a clear indication that the car is aware of hazards so that the driver doesn’t feel the need to react. The car needs to provide reassurance that it is doing a good job and is in control of the situation.

The HMI will need to be adapted according to the driver’s state, the environment and the driving experience.

Of course, the engineering has to be right (e.g. fitting HUDs into dashboards) and the price has to be affordable. In the future, solutions that overlay data from the digital domain on video feeds are likely to be early applications with wide adoption.

In response to a question, Halady said that there will probably be regulation of what can be put on the screen, but there will be lots of high-resolution video in the instrument cluster and a lot of combined use of audio and video triggers. There will be gesture input, but it needs to be integrated into the full user experience.

Analyst Comment

In private discussions with Halady, we talked about one of the really difficult problems of self-driving cars: the question of when and how the car can hand control to the driver. Drivers may be in a very different “state of consciousness” from that needed for driving, and it may take several seconds for them to get into the right mental frame and gain full awareness of the situation. That can be a long distance in a moving car; at 100 km/h, a car covers about 28 metres every second. The better the car gets at taking control and the more that the driver trusts the car, the worse this problem becomes. (BR)