With more than 65,000 attendees, NAB came back strong this year as content owners, creators, producers, and distributors weighed the benefits of a new breed of hardware, software, and solutions. Technology analysts like Garrett Research and members of MESA (Media & Entertainment Services Alliance) said attendees were ready to put the pandemic in the rearview mirror, take advantage of some of the new and emerging technologies, and reach more consumers on more screens.

The challenge for us, though, was to separate the bling (like generative AI everywhere) from the real stuff studios, networks, streamers, and creators/producers want and need. It meant we probably missed a lot of what might be good ideas, products, and solutions as we focused on what both sides of the industry equation were doing today and what they would be using tomorrow. Second-guessing yourself isn’t easy! Just because it was so darn interesting, we spent a few hours learning “how they did that” in several behind-the-scenes sessions.

American Cinema Editors (ACE) held a number of very interesting sessions at NAB, including an LOTR (The Lord of the Rings) session that focused on the expensive work Amazon’s team did to deliver J.R.R. Tolkien’s epic project to people’s home screens. Other ACE sessions that creation friends had to explain to us included HBO’s behind-the-scenes work on creating The Last of Us series from a video game. It was great, as was the session on the creative team’s endeavors behind Apple TV+’s wildly popular Ted Lasso.

As we noted earlier, the “theme” of this year’s show appeared to be generative AI because “everyone” seemed to have it, even though discussions in some of the booths left us with the feeling that some of the booth staff, especially the publicists, couldn’t even spell it. When that happens, we focus on talking with companies and people we are familiar with and who we know understand what they’re talking about; and when they don’t, they find out.

We spent time with folks at Adobe’s and Blackmagic Design’s booths because the two firms were rolling out new AI-enabled production/post tools that had been used by postproduction folks to help make Jerry Bruckheimer/Joseph Kosinski’s Top Gun: Maverick and James Cameron’s Avatar: The Way of Water the immersive tentpole productions they are. The technology holds a lot of promise for production and post work but… we’ll see.

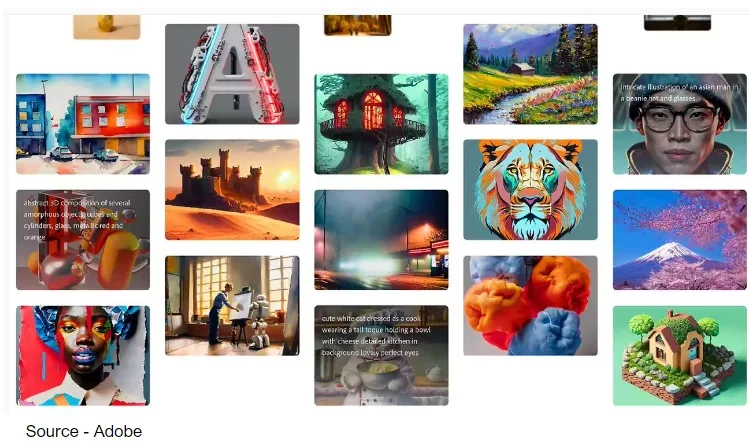

The new Firefly solutions for Premiere Pro have been in development for some time using the company’s Sensei AI program and will even enable editors to mix footage from different cameras, which has always been an expensive, time-consuming challenge. Adobe’s new tools and solutions are part of the company’s Creative Cloud video and audio applications that can create and transform audio, video, illustrations, and 3D models, and ultimately will be used in every step of a project’s workflow.

This next generation of creative tools will make it faster and easier for postproduction specialists and editors to boost project color levels, insert placeholder images, add effects and more, simply by typing ideas into the AI’s text prompt. The algorithm does the rest. Being rolled out this year, the AI enhancements will also enable audio editors to insert background music and sound effects just by typing in what the editor wants to achieve. Adobe team members said that plans call for releasing generative AI features including animated font features and automated B-roll features to analyze script content for producing storyboards and suggested video clips, thereby eliminating a lot of tedious, time-consuming film/show production work.

It was important to see that the company has also expanded its Frame.io video collaboration, Camera to Cloud, and security features, including forensic watermarking.

We admit it, we’ve been in awe of Blackmagic for years but thought the acquisition of DaVinci back in 2009 was one of CEO Grant Petty’s dumbest moves of all time. Boy, did he prove us wrong. We probably shouldn’t have doubted the Aussie because he has always seemed to make his ideas become reality, thanks in no small part to an excellent team of professionals.

The latest version of DaVinci Resolve (18.5) is a major refresh of a product we see in almost every post facility we visit. It has a whole set of new AI tools, over 150 feature upgrades, and even updates to the Cut Page.

There seems to be an enhancement for everyone on the postproduction team in the new version including remote monitoring capabilities.

That probably should have been enough Blackmagic news for NAB attendees to absorb, but there’s a reason they always have a big booth: they need it. They showed off a new 4K switcher, the URSA Mini Pro 12K camera, an IP converter, and a full deck of new PCIe capture/playback cards. In addition to getting the Mini Pro 12K camera added to Netflix’s approved list, Blackmagic’s president, Dan May, was proud to show off the fact that the widely used Pocket Cinema Camera now has vertical video support. He noted that the downloadable update would enable cinematographers to easily deliver content to outlets in a vertical format. Yeah, it will probably look better than the stuff on TikTok.

While we think of Sony as a content delivery house, we found their content creation activities at NAB to be very interesting. Sony’s virtual production LED solutions and those from the other major players like ILM don’t come cheap, but we aren’t aware of a studio today that doesn’t have one, is having one built, or has one or more on order. It’s a little mind-blowing how these new sets have made it better, faster, and more economical to realistically produce locations from anywhere in the world… and beyond, right in the studio.

Sony’s solution, like those of others, uses high-brightness, wide-color-gamut crystal LED display panels to create a shoot environment without travel, without a seasoned construction crew and its costs, and with a major reduction in environmental impact. Combine the stage sets with today’s advanced 12K cameras and advanced virtual production workflow, and in no time, you’re knee-deep in a great new way to create films and shows. Other than complaining about missing the travel to exotic locations, we haven’t heard anyone on a project crew say they miss the good ol’ days.

As a filmmaking friend of mine said, “It’s rather nice to be home in the evening and watch the kids grow up.”

In addition to the dominating display area, Sony, like many NAB major exhibitors, introduced a wide range of new hardware and software solutions as well as refreshed/enhanced production and workflow products for broadcast, live event, newsgathering, and film/show content creators. They also unveiled new creative cloud solutions for studios and project owners, as well as a more modestly priced cloud for indie and smaller project producers.

We know cloud storage has a lot of advantages, but it’s just hard for us to accept the idea that having all of someone’s creative work in some giant storage facility somewhere is better than having it where you can see it; work on it; and have the raw, insurance, progress, and final copies right here and have backup copies there.

So, we stopped by the OWC booth to see their new Jellyfish NAS solutions and, yes, to catch up with the company’s founder/boss Larry O’Connor to find out what else the company is working on for content creators and teams. O’Connor said that the flash-based shared and portable network-attached solution was designed specifically for the connectivity and speed today’s busy creatives need and their workflows demand.

Jellyfish was designed specifically for 12K, VR, and AR production, and handles the higher resolution and bit rates that the newer technologies require. “It’s really frictionless throughput filmmakers and teams need today,” he added, “because, increasingly, it’s all about capture, create, collaborate, complete, and move on to the next creative project.”

There were more companies, booths, and gee-whiz products at this year’s NAB, but there were three overriding initiatives that we felt would help/impact the global content creation and delivery industry: ATSC 3.0, HDR, and AI creep.

During her sit-down with NAB president Curtis LeGeyt, FCC chairwoman Jessica Rosenworcel said that despite bumps in the road over the past six years, progress has been made in rolling out NextGen TV (ATSC 3.0). She noted that many of the backward compatibility issues have been solved, so consumers won’t have to buy either a new ATSC 3.0-compatible screen or a special reception box. In addition, she said that the government agency as well as industry leaders have made excellent progress on the transition schedule, with 60% of the US public already in range of a NextGen TV signal and a firm schedule for making it available to the other 40%.

Translation—it’s still a work in progress but … they’re talking.

Speaking of collaboration, Annie Chang, Universal’s VP of creative technologies, told display technology attendees that HDR will be an exciting component in filmmakers’ tool kits this year and should be widely enjoyed by theatergoers as well. Backed by members of SMPTE (Society of Motion Picture and Television Engineers), AMPAS (Academy of Motion Picture Arts and Sciences), ASC (American Society of Cinematographers), and NATO (National Association of Theatre Owners), HDR will deliver higher brightness, deeper blacks, and a wider range of colors to folks enjoying movies in the 200,000 theaters worldwide.

But the topic that frightened and excited us most at this year’s event was AI, especially when NAB’s president Curtis LeGeyt cautiously talked about the benefits and dangers of AI across the entertainment industry. “In no time at all, AI has gone from being a fuzzy techie idea to solutions that will solve issues for content creation, production, and delivery, as well as permeate the entire economy. Overexuberant implementation could have a damaging effect on international, national, and local broadcasters, as well as journalists and content development, creation, and production,” he said. “AI has a lot of potential that people in the industry can take advantage of to deliver better and more engaging news and entertainment. But it could just as easily distort the news/stories and displace/replace people who create the diverse video stories people enjoy.”

Guess we’ll just have to see if the technology can be implemented intelligently for the long haul or for short-term profits. We all know that the industry, suppliers and creators alike, has been through a tough couple of years. Profit dropped about 2% annually during the period. However, it has stabilized and is even showing signs of improving more than 8% this year to nearly $60 billion for technology that is used to create, produce, manage, monetize, support, and store professional media and broadcast content.

Maybe slow, easy, and professional is a lot better than the software guru’s philosophy of “move fast and break things.” It doesn’t really work for them and certainly won’t work for the M&E industry. There are too many things to break and even more to fix.