Metadata has been used for years in the capture, production and distribution of file-based content, where it has helped reduce errors and production time while preserving creative intent for the end user. Broadcasters and producers of live content, however, have not used metadata and have in fact resisted its introduction into their production workflows. As HDR production moves into more mainstream broadcast applications, there is an increasing need for a metadata solution that delivers consistent HDR and SDR feeds to consumers.

Sony has taken a leading role in developing a metadata solution that they have now unveiled as “SR Live for HDR.” The SR stands for Scene Referred, a reference to the capture of the light by the camera at the scene.

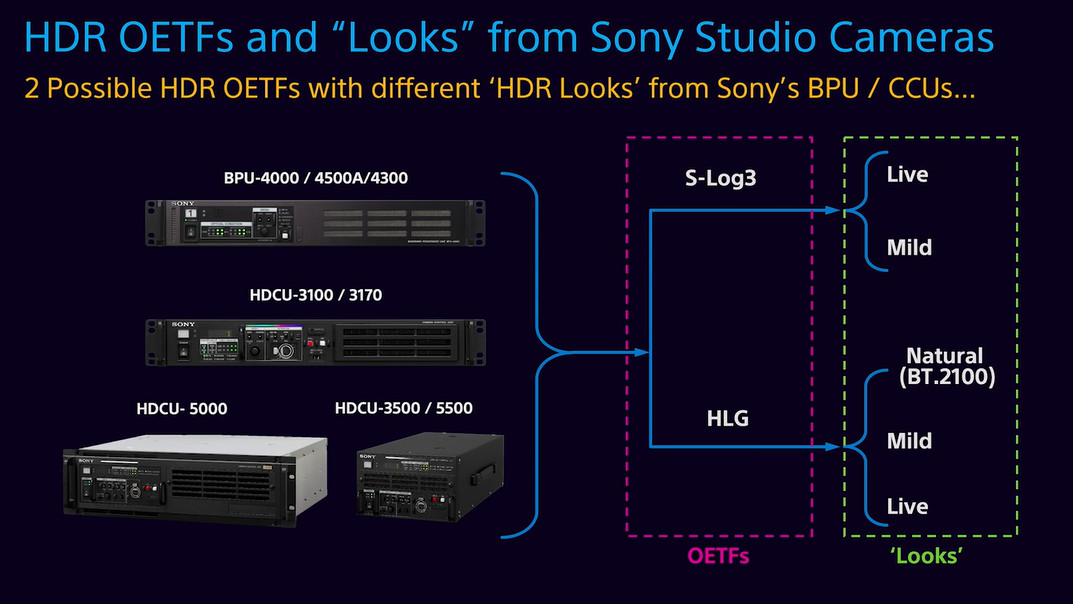

In a video presentation called Powering HDR Workflows, Sony CTO Hugo Gaggioni started by explaining that Sony’s 4K camera CCUs can output a 4K or FHD HDR signal in S-Log3 or HLG format while simultaneously outputting an FHD SDR signal. Operationally, it has become apparent that camera operators need to “shade,” or adjust, their cameras to make a good SDR image, with an acceptable HDR image as a byproduct. Sony calls the difference between these two images SDR gain. The reverse, shading for the HDR image, has not been proven to create a consistent SDR image as an automated byproduct (and adding a second camera operator to shade the SDR image is too costly and not very practical).

In the Sony workflow, both HDR and SDR monitors are reviewed to be sure both signals look good. The final 4K master signal, in S-Log3 or HLG format, is converted to the needed HDR and SDR distribution formats by Sony’s HDRC-4000 converter. This workflow has already been in use for some time on a number of live sporting and entertainment events.

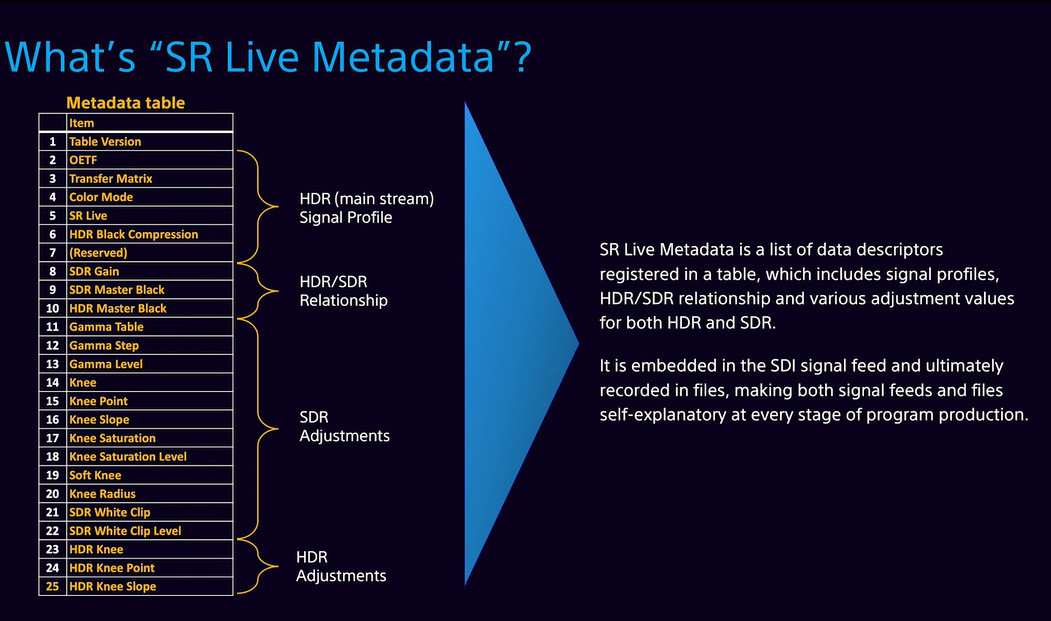

The key to creating the SDR signal in the HDRC-4000 unit is having the correct shader and gain values for every camera in the production. This is only possible if these values can be carried as metadata. Sony’s SR Live Metadata solution captures this data from each camera and embeds it in the header of the SDI-formatted signal and storage files. The graphic below shows all the data that is captured. Note that the main 4K HDR settings and adjustments are captured along with the shading done to obtain the best SDR image. This data is also captured by Sony’s 4K HDR camcorders for file-based workflows.
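To make the idea concrete, here is a minimal Python sketch of how a per-camera gain value carried as metadata could be used to derive an SDR level from an HDR one. It is not Sony's packet format or the HDRC-4000's processing: the `CameraMetadata` record, its field names, the assumption that SDR gain is expressed in dB using the 20·log10 video convention, and the simple clamp to a 0 to 1 range are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraMetadata:
    """Hypothetical per-camera record; field names are illustrative only."""
    camera_id: str
    sdr_gain_db: float  # assumed: offset between the HDR and SDR images, in dB


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear multiplier (20*log10 convention, assumed)."""
    return 10.0 ** (gain_db / 20.0)


def derive_sdr_linear(hdr_linear: float, meta: CameraMetadata) -> float:
    """Scale a scene-linear HDR value by the camera's SDR gain, then clamp to [0, 1].

    A real converter would also apply tone mapping and a transfer function;
    this sketch only shows where the metadata value enters the calculation.
    """
    scaled = hdr_linear * db_to_linear(meta.sdr_gain_db)
    return min(max(scaled, 0.0), 1.0)


if __name__ == "__main__":
    # Two cameras shaded differently produce different SDR levels from the
    # same HDR value, which is why the converter needs per-camera settings.
    cam_a = CameraMetadata(camera_id="cam-A", sdr_gain_db=-6.0)
    cam_b = CameraMetadata(camera_id="cam-B", sdr_gain_db=-10.0)
    for cam in (cam_a, cam_b):
        print(cam.camera_id, round(derive_sdr_linear(1.5, cam), 3))
```

The point of the sketch is only that the same HDR input maps to different SDR results depending on each camera's recorded settings, so a downstream converter cannot produce a consistent SDR feed without them.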

In an email exchange, Gaggioni said that Sony has used such metadata for some time but the extension to the more complete SR Live Metadata packet was “requested by some US broadcasters to support the creation of SDR masters (in live productions) when the programs exhibited clips shot in different shooting conditions. While the joining of HDR clips is just fine, the derivation of a proper SDR version is very difficult without knowledge of the settings of the cameras during acquisition.”

He went on to note that,

“Our customers are very enthusiastic about this technical development. While this metadata packet describes the capabilities of Sony professional cameras and camcorders, they are not applicable to other devices. If standardization activities appear in the future related to this area, Sony will be glad to participate.”

The presentation also noted that “side effects” can occur in the mixed HDR/SDR production process. Gaggioni mentioned SDR-HDR-SDR round-tripping as an issue that can create over-saturated color (when converting graphics) or under-saturated color (when converting video), depending on the source element. Sony’s solution is S-Log3 Live and HLG Live, which include several “Looks” to choose from.

Sony is also demonstrating new monitors dubbed the PVM-X series. These will offer 1,000 nits full-screen and 4K resolution, and will be able to perform conversions like those of the HDRC-4000 for on-set and field grading. (CC)