As well as our dash around the monitor and virtual production topics at IBC, we stopped in with a small number of other exhibitors that have in the past shown interesting technology developments.

The EBU is always interesting to talk to at IBC as it coordinates and represents the public service broadcasters. In our area of interest, the EBU was showing a workflow for HDR and SDR that it has been working on. The initiative to develop a standardised workflow came out of a meeting on the topic in Baden-Baden, Germany. When broadcasters including Sky, the BBC and others compared notes on their workflows, they found that almost everyone had independently developed almost the same methodology. That suggested that there could be real advantages in standardising the workflow, both for better automation and to develop better tools.

We wondered if there were issues with different HDR technologies, but it seems that all but one of the participants used either HLG or S-Log3 (the Sony log transfer curve); only one used PQ. The workflow is based around HDR, with the SDR image derived from the HDR feed and with multiple HDR outputs at up to 800 cd/m² for HLG or 1,000 cd/m² for PQ. The experience of the companies was that better SDR is produced this way.

The UltraHD Forum was at IBC to discuss a couple of topics. There is a lot of work going on to reduce the latency of different content, especially streamed content. Viewers and listeners really dislike the kind of delay that can mean hearing by one route that a goal has been scored several seconds before seeing it on the TV!

The group was also talking about the fact that some broadcasters, especially those in the US, are using different rendering parameters for sports, such as a peak brightness of 203 cd/m² and particular LUTs. Regular SDR, on the other hand, is graded at 100 cd/m². There has been discussion of how best to convert from one to the other to avoid big changes in the broadcast stream. My own speculation, which raised a smile but got no formal confirmation, is that it might make sense to develop a ‘Sports Mode’ for TVs to add to the ‘Filmmaker mode’ that is already implemented by the best TV makers. That is a topic I have previously discussed with companies including Sky, which has told me that one of the drivers behind the development of Sky Glass was that set makers were not responsive to the idea of a special sports mode.

The UltraHD Forum is in discussion with the UltraHD Alliance about whether something can be done.

The UHD Forum showed the low latency between the two different streams. Image: Meko

DVB was promoting its DVB-I technology, which is being used in a pilot in Germany. The trial is using DVB broadcast together with low latency DVB-DASH (HEVC + NGA using AC-4) to deliver hybrid content. The extra layer can add enhanced and personalised services such as immersive audio, higher resolutions, targeted advertising and accessibility services. One of the target delivery systems for the extra layer is 5G broadcast.

That’s a topic that we saw elsewhere at IBC. NHK was showing how VVC can be used to deliver multiple layers of content. It had HD (1 Mbps), 4K (9 Mbps) and 8K (25 Mbps) layers. The three streams can be derived from a single 8K stream and allow simplification of broadcasting in multiple resolutions. It also demonstrated that it could create a good 8K stream at 35 Mbps by combining all three streams (which could be delivered via different connections to a set or box).
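As a quick check on those figures, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the `LAYER_MBPS` table and the `cumulative_mbps` helper are names made up here, and the intermediate totals assume that each layer simply adds to the ones below it, which NHK did not spell out. Only the combined 35 Mbps figure comes from the demo.

```python
# Per-layer bitrates in Mbps, as quoted for the NHK demo.
LAYER_MBPS = {"HD": 1, "4K": 9, "8K": 25}


def cumulative_mbps(layers):
    """Return the running total bitrate after each layer is added, in order.

    Assumption for illustration: layers are strictly additive, so the total
    needed for a given resolution is the sum of that layer and those below it.
    """
    totals = {}
    running = 0
    for name, rate in layers.items():
        running += rate
        totals[name] = running
    return totals


totals = cumulative_mbps(LAYER_MBPS)
for name, total in totals.items():
    print(f"up to {name}: {total} Mbps")
```

The final total matches the 35 Mbps quoted for the combined 8K stream.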

The technology can also be used to add different content that can be integrated at the set. NHK had demos of a switchable picture-in-picture image and of a switchable overlay to provide signing for the hard of hearing. It also showed how it could use voice recognition and AI to automatically convert the audio stream to sign language.

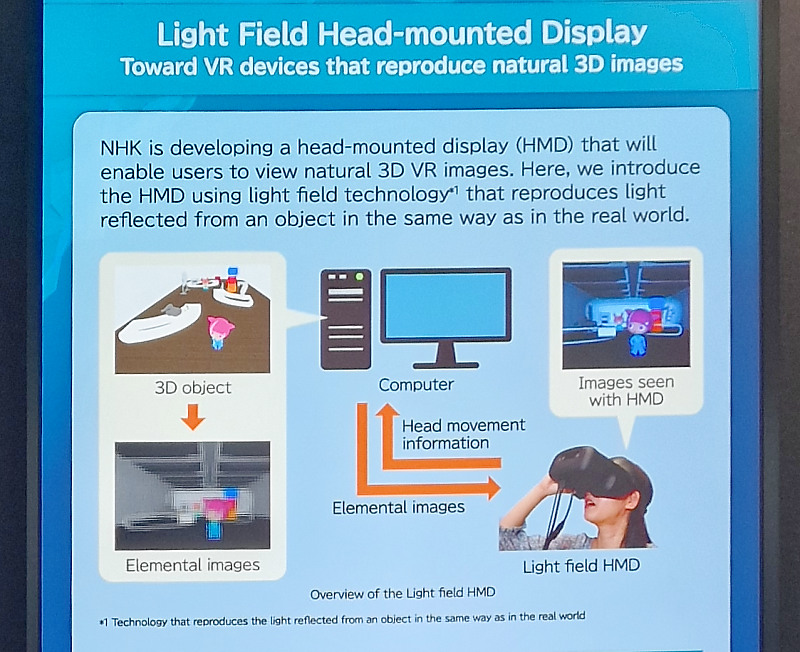

NHK also had a demo of a head-mounted display that was being used to show a genuine light field image to reduce discomfort. Unfortunately, the resolution was so reduced that it was difficult to get a good sense of how it would look at an adequate resolution.

Another company talking about layered codecs was V-Nova, whose technology is the basis of MPEG LC-EVC. That is now standardised as a process that can be used with any codec, and the firm has been developing its ecosystem. It now has a Theo player and an AMLogic STB chip that can support the technology to create 4K content. As well as regular video content, the firm was showing the use of the process in encoding point clouds for VR applications. It was also highlighting work by Intel and Meta that showed that the LC-EVC layer process reduces the time and bit rate used for encoding. The work was published at SPIE.

Spin Digital is always interesting as it tends to specialise in encoding high resolutions. The company was showing real-time live encoding of 8K HEVC and 4K VVC at 60p, with a latency of around 3 seconds ‘glass to glass’. The firm can encode 8K at 30 fps in VVC with MPEG-H audio in real time and is working on higher frame rates.

A name from the display past at IBC was LC-Tec of Sweden. The company was a pioneer of liquid crystal technology ‘back in the day’ and twenty years ago had high hopes for reflective cholesteric LC displays. It also had a deal with the French bistable display developer Nemoptic, now defunct. I was surprised to see the firm at IBC but heard that it has moved its business away from displays to become a leader in LC-based optical filters. It makes a range of electrically adjustable neutral density filters for photography and cinematography and is also developing similar technology for augmented and virtual reality. The firm also has fast optical switches for implementing stereo 3D with passive glasses. (BR)