“Light field display” may not be a familiar term to most people. Most of us, however, have come across applications such as virtual reality viewers, stereoscopic displays, 3-D televisions and 3-D pictures.

Essentially, all these systems try to reproduce the “light” emitted by physical objects, or by “digital” objects shown on digital displays, using an optical system that delivers the appropriate image separately to each of the viewer’s two eyes. Such display systems might be built from a large LCD display with pixel-level shutters, lenticular lenses, two-dimensional lens arrays, or near-eye micro-displays with lenses. In all of these cases, two rays from a particular spot on the object are reproduced and directed towards the viewer’s two eyes according to the position of the object relative to the viewer. As a result, the viewer is “fooled” into thinking that the two rays come from the actual object itself.

There is a big problem. Most objects are not flat and, as a result, focusing and convergence become big issues. Let’s look at how the eyes handle focusing and convergence (at a simple level).

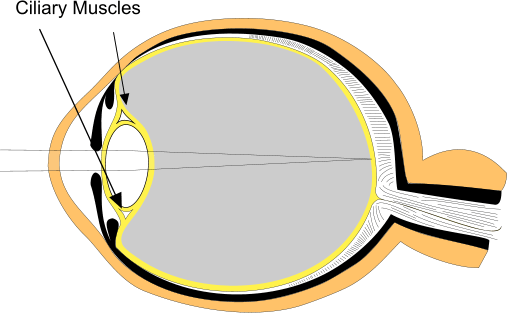

The ciliary muscles adjust the shape of the lens in the eye. Image: Meko Ltd

Focusing deals with adjusting the thickness of the soft lens near the front of the eyeball so that the object being viewed is in focus at the retina. Depending on the distance of the object, the ciliary muscles make the lens thicker or thinner until the image at the retina is in focus.

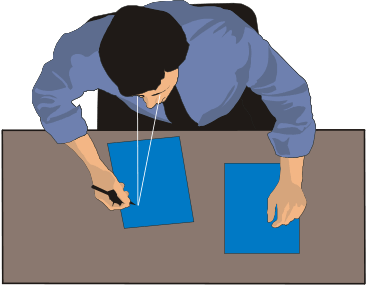

Convergence deals with the line of sight of each eye. When the object is close by, the lines of sight of the left and right eyes cross at a short distance and form a larger angle between them. For a distant object, the lines of sight cross at a large distance and form a smaller angle (see the image above).
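To put rough numbers on the relationship between distance and convergence angle, here is a minimal sketch. It assumes the object sits straight ahead of the viewer and uses an eye separation of 63 mm, which is an illustrative value and not a figure from this article; the function name `vergence_angle_deg` is likewise made up for the example.

```python
import math

def vergence_angle_deg(distance_m, eye_separation_m=0.063):
    """Angle between the two lines of sight for a point straight ahead.

    eye_separation_m is an assumed, illustrative value.
    """
    # Each eye rotates inwards by atan(half the separation / distance).
    half_angle = math.atan((eye_separation_m / 2) / distance_m)
    return math.degrees(2 * half_angle)

# Near objects give a large angle, distant objects a small one.
for distance in (0.3, 1.0, 10.0):
    print(f"{distance:5.1f} m -> {vergence_angle_deg(distance):.2f} degrees")
```

The angle shrinks quickly with distance, which is why convergence is a strong depth cue for nearby objects and a weak one for objects far away.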

The muscles are usually in the relaxed state for far vision and in the contracted state for near vision. As a result, when we read at a close distance for a long time, the eyes can get very tired.

When the viewer is looking at a real physical object, focusing and convergence are adjusted automatically such that a small convergence angle corresponds to distant focus and vice versa. On the other hand, in digital light field displays, regardless of the distance of the perceived object, the eyes are always focused at the display. The perception of depth comes from the level of convergence between the two eyes.
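The mismatch described above can be expressed as a simple number. The sketch below assumes the common optical convention of measuring focus demand in diopters (one divided by the distance in metres); the function names and the example distances are hypothetical and chosen only for illustration.

```python
def focus_demand_diopters(distance_m):
    """Focus demand for an object at the given distance, in diopters (1/m)."""
    return 1.0 / distance_m

def focus_convergence_mismatch(display_m, perceived_m):
    """Gap between where the eyes focus (the display) and where
    convergence says the object is (the perceived distance)."""
    return abs(focus_demand_diopters(display_m) - focus_demand_diopters(perceived_m))

# Assumed example: a display 2 m away showing objects at various perceived depths.
display_distance = 2.0
for perceived in (0.5, 2.0, 10.0):
    gap = focus_convergence_mismatch(display_distance, perceived)
    print(f"perceived at {perceived:4.1f} m -> mismatch {gap:.2f} D")
```

For a real object the two distances are the same and the mismatch is zero. On the display, the mismatch grows as the perceived object moves away from the display plane, and it grows fastest for objects that appear close to the viewer.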

Such an unnatural viewing process requires the brain to recognize the situation and process the signals from the left and right eyes so that the two images are merged into a single image, with perspective based on “light field” rays that are all generated at a single focal plane. The brain operates rather like a Graphics Processing Unit (GPU) in a computer and can get very “hot” performing all this processing. As a result, extended viewing of such light field displays can become very tiring and, in extreme cases and for certain groups of people, could cause seizures and other medical problems.

It is not uncommon for viewers of a 3-D movie, myself included, to need a moment for the brain to handle the transition when one 3-D scene cuts to another. Such abrupt scene changes do not exist in real life, where the speed of a scene change is limited by the movement of the viewer’s head.

Without getting into deep detail, generating the data for a light field display is also very difficult. It requires multiple cameras and special software for splicing, and most of the time it also requires time-consuming human intervention. Lytro Immerge introduced a light field image and video capture system, which requires multiple imaging devices and a very large image processing computer.

Image: Lytro

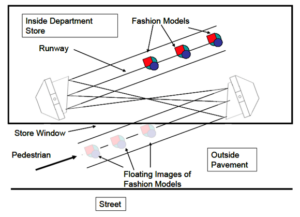

There is another, less well-known class of light field display, usually classified as a “floating image” display. As this type of system deals with physical objects rather than digital images, it is called an “analogue” light field display. Such floating image displays usually take the two rays for the left and right eyes from a point on the physical object and relocate them using reflectors/lenses, so that the same two rays emerge from a point in space at another location.

The reflector/lens system has the capability of providing depth information such that the closer points will be relocated closer to the viewer and the further points, further away. As every point at the object is relocated according to the actual 3-dimensional locations, the resulting image will appear to be in 3D and will be floating in front of the viewer.

Most such floating image systems are based on relocation from one focal point to another, which is only applicable to stationary and relatively flat objects. There is a recent invention in which the object and the floating image are deliberately placed away from the focal points, so that the floating image moves synchronously with the movement of the object. This property opens up many dynamic applications in public display and advertising. The system is called a 4-D floating display system, as a 3-D image moves in a fourth dimension.

In this system, the light rays from every point of the object are relocated to another point in space with the depth information preserved and, as a result, the system does not suffer from the same issues as digital light field displays. The focusing and convergence of the eyes are in their normal “seeing” conditions, which are naturally coordinated. Another advantage is that real physical objects, such as a watch or a diamond ring, can “float” without the need for light field cameras, extensive software processing or a digital light field display.

Watch out next Halloween. If you see a ghost walking towards you, it could simply be the 4-D image of a “real” ghost hidden behind a curtain. – Kenneth Li